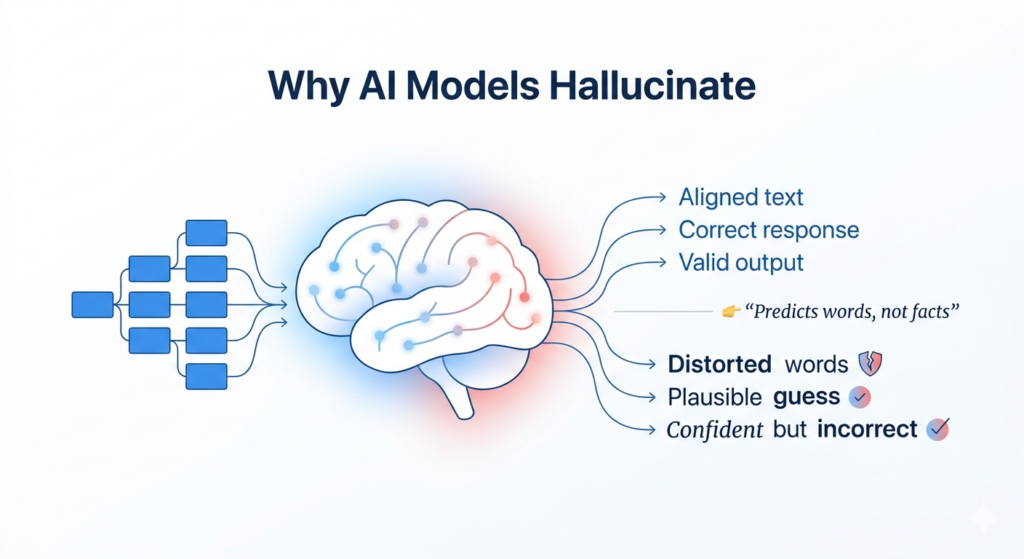

AI models hallucinate because they generate probabilistic responses without verifying factual accuracy. When context is incomplete, ambiguous, or out-of-distribution, they produce confident but incorrect outputs due to lack of grounding, memory limitations, and absence of real-time evaluation.

Key reasons why AI models hallucinate include:

- Next-Token Prediction: Large Language Models (LLMs) do not truly understand facts or reality. They work by predicting the most likely next word based on patterns learned from training data.

- Limited or Poor Training Data: If the model has not been trained on accurate or complete information, it may generate incorrect but believable answers to fill the gaps.

- Biased Training Data: AI models can inherit and amplify biases from their training data, sometimes creating false patterns or misleading conclusions that lead to hallucinations.

- Rewarded for Always Answering: Many models are trained to respond to every prompt, which can encourage confident guessing instead of admitting uncertainty.

- Over-Optimization for Fluency: In trying to produce highly polished responses, models may prioritize what sounds most probable rather than what is actually correct.

In simple terms:

AI doesn’t “know” facts; it predicts the most likely next word based on patterns.

Technically:

Large Language Models optimize for probability, not factual correctness, which leads to confident but hallucinated outputs.
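To make the point about probability concrete, here is a minimal sketch of how a next token is chosen. The prompt, the candidate tokens, and the scores are all invented for illustration and do not come from a real model; the only real technique shown is softmax followed by picking the highest-probability token.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}

# Hypothetical scores for the token after "The capital of Australia is".
# These numbers are made up; they only mimic a pattern where the more
# frequently mentioned city outscores the correct one.
logits = {"Sydney": 2.1, "Canberra": 1.9, "Melbourne": 0.4}

probs = softmax(logits)
prediction = max(probs, key=probs.get)
print(prediction)  # Sydney: the most probable token, not the correct one
```

Nothing in this selection step looks at whether the chosen token is true. It only compares scores, which is why a fluent but wrong answer can come out on top.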

In production systems:

Hallucinations increase when multi-step workflows, memory gaps, and lack of evaluation layers compound errors.

What do hallucinations really mean in AI?

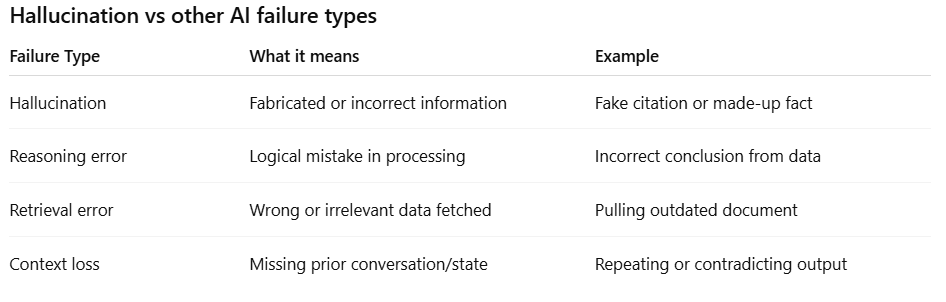

A hallucination occurs when an AI system generates:

- Factually incorrect information

- Fabricated references or data

- Misleading or unverifiable claims

The challenge is that these outputs often appear highly confident and well-structured, making them difficult to detect without verification.

In real-world deployments, AI systems often produce confident but incorrect outputs when operating under uncertainty, making hallucination detection and evaluation critical for reliability.

Why does this happen?

1. Probability-based generation

LLMs predict the next word based on patterns in training data. They are not designed to verify facts or check correctness.

2. No built-in fact-checking

Unless connected to external systems, the model cannot validate whether its output is accurate.

3. Training objective mismatch

Models are optimized for:

- Fluency

- Coherence

- Relevance

Not for:

- Truth

- Accuracy

- Verification

4. Lack of uncertainty handling

Most models are not trained to say “I don’t know,” which leads them to generate hallucinated answers even when unsure.
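One way to picture uncertainty handling is an abstention rule: return the top answer only when its score clears a threshold, and otherwise decline. The sketch below is an illustration only. The function name `answer_or_abstain`, the threshold of 0.7, and the candidate scores are all hypothetical, and it assumes the scores come from some reasonably calibrated evaluator, which raw model confidence often is not.

```python
def answer_or_abstain(candidates, threshold=0.7):
    """Return the top-scoring answer, or abstain if its score is too low.

    `candidates` maps each candidate answer to a score between 0 and 1.
    The scores are assumed to be calibrated; that is an assumption of
    this sketch, not a property of any particular model.
    """
    best = max(candidates, key=candidates.get)
    if candidates[best] < threshold:
        return "I don't know"
    return best

# Hypothetical scores for two questions.
clear_case = {"Paris": 0.92, "Lyon": 0.08}
unclear_case = {"1947": 0.40, "1948": 0.35, "1950": 0.25}

print(answer_or_abstain(clear_case))    # Paris
print(answer_or_abstain(unclear_case))  # I don't know
```

The threshold trades coverage for reliability: raising it produces more refusals and fewer confident guesses.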

Hallucinations can create serious risks in production systems.

Why do hallucinations increase in production AI systems?

- Multi-step agent workflows amplify small errors

- Context is lost across interactions or tools

- Retrieval systems return incomplete or irrelevant data

- No real-time evaluation or feedback loop exists

- Systems lack traceability to detect failure points
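The compounding effect in multi-step workflows can be shown with simple arithmetic. The sketch below assumes every step succeeds independently with the same probability, which is a simplification; the 95% per-step accuracy is an illustrative number, not a measurement.

```python
def workflow_success_rate(step_accuracy, steps):
    """Probability that every step succeeds, assuming independent steps."""
    return step_accuracy ** steps

# Illustrative per-step accuracy of 95% across workflows of growing length.
for steps in (1, 5, 10, 20):
    rate = workflow_success_rate(0.95, steps)
    print(f"{steps:>2} steps: {rate:.3f}")
# Prints 0.950, 0.774, 0.599, 0.358 for 1, 5, 10, and 20 steps.
```

Under these assumptions, a step that is right 95% of the time yields a ten-step workflow that is right only about 60% of the time.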

How to reduce hallucinations in production systems?

Hallucinations cannot be fully eliminated at the model level; they must be controlled at the system level.

Modern AI systems reduce hallucinations by adding:

- Real-time output evaluation

- Context validation and memory tracking

- Traceability across multi-step workflows

- Guardrails for unsafe or inconsistent outputs
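As a rough illustration of how evaluation and traceability can fit together, the sketch below runs a workflow step by step, checks each output, and records the result so the failing step can be located afterwards. The `Trace` class, the `run_workflow` function, and the two example steps are hypothetical names invented for this sketch; they do not describe any specific platform's API.

```python
from dataclasses import dataclass, field

@dataclass
class Trace:
    """Ordered record of each step's output and whether its check passed."""
    steps: list = field(default_factory=list)

    def record(self, name, output, passed):
        self.steps.append({"step": name, "output": output, "passed": passed})

    def first_failure(self):
        """Return the name of the first step whose check failed, if any."""
        for step in self.steps:
            if not step["passed"]:
                return step["step"]
        return None

def run_workflow(steps, trace):
    """Run (name, fn, check) steps in order; stop at the first failed check."""
    value = None
    for name, fn, check in steps:
        value = fn(value)
        passed = check(value)
        trace.record(name, value, passed)
        if not passed:
            return None  # guardrail: do not pass bad output downstream
    return value

# Hypothetical two-step workflow in which retrieval returns nothing.
steps = [
    ("retrieve", lambda _: [], lambda docs: len(docs) > 0),
    ("answer", lambda docs: f"Answer from {len(docs)} docs", lambda text: bool(text)),
]
trace = Trace()
result = run_workflow(steps, trace)
print(result, trace.first_failure())  # None retrieve
```

Stopping at the failed retrieval step keeps the answer step from generating text with no supporting context, and the trace shows where the workflow broke.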

Platforms like LLUMO AI act as a reliability layer, enabling teams to detect, measure, and reduce hallucinations before they impact users.

👉 Want a deeper breakdown of how AI reliability impacts real-world systems?

Read the full AI Reliability Whitepaper → https://try.llumo.ai/reliability-what-why-how-ai-reliability-whitepaper/

Want to fix AI reliability in production?

👉 Start with LLUMO AI and monitor, evaluate, and improve your AI systems in real time.

Key insights

- Hallucination is a structural limitation, not a temporary issue

- Confidence in AI outputs is not a reliable signal

- Prompt engineering alone cannot solve hallucinations

- Reliable systems require validation layers

Real-world example

A legal AI assistant generates a case citation that appears valid in format and tone. However, when checked, the case does not exist in any database. The output is convincing enough to pass unnoticed without manual verification.
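A check of this kind can be sketched as a lookup against a trusted source. In the example below, a small in-memory set stands in for a real legal database, and the function name `unverified_citations` and the fabricated citation are invented for illustration; a production system would query an authoritative case-law service instead.

```python
# Stand-in for a trusted case-law database (an assumption of this sketch).
KNOWN_CASES = {
    "Marbury v. Madison, 5 U.S. 137 (1803)",
    "Brown v. Board of Education, 347 U.S. 483 (1954)",
}

def unverified_citations(citations, known_cases):
    """Return the citations that cannot be found in the trusted source."""
    return [c for c in citations if c not in known_cases]

# Hypothetical model output: one real citation and one made-up citation
# that is well-formed but does not exist.
generated = [
    "Brown v. Board of Education, 347 U.S. 483 (1954)",
    "Smith v. Nonexistent Corp., 999 U.S. 1 (2031)",
]

flagged = unverified_citations(generated, KNOWN_CASES)
print(flagged)  # ['Smith v. Nonexistent Corp., 999 U.S. 1 (2031)']
```

Exact string matching is deliberately strict here. Anything that cannot be confirmed is flagged for manual review rather than trusted because it looks well-formed.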

Related topics

👉 /how-to-detect-AI-hallucinations

👉 /how-to-improve-AI-reliability

👉 Download the complete AI Reliability Whitepaper

FAQ

Why do AI models hallucinate?

AI models hallucinate because they predict likely outputs rather than verifying factual correctness, especially when context is missing or unclear.

Can AI hallucinations be completely eliminated?

No, but they can be significantly reduced using evaluation layers, better context handling, and system-level guardrails.

Why are hallucinations more common in production systems?

Because real-world inputs are unpredictable, and multi-step workflows increase the chance of compounded errors.

How do you detect hallucinations in AI systems?

By implementing real-time evaluation, output validation, and traceability across the system.