Introduction: The Gap Between AI Demos and AI That Actually Works

Let me tell you a story I hear almost every week.

A CTO walks into a board meeting, laptop open, ready to show off the AI system their team has spent six months building. The demo is flawless. The board is impressed. Budget gets approved. Engineering doubles down.

Three months later, the same system is live in production, and it’s hallucinating customer data, routing support tickets incorrectly, producing responses that make no sense, and occasionally generating outputs that could damage trust publicly. The CTO is back in the boardroom, this time without the laptop.

This story is not about poor engineering. It’s about a gap nobody prepared enterprises for: the gap between an AI system that works in controlled environments and one that performs reliably in the real world, at scale, under pressure, with unpredictable users and changing business conditions.

That gap has a name: The AI Reliability Problem.

And solving it is exactly why LLUMO AI was built.

In this article, I want to give you a complete and honest map of the AI reliability landscape. We’ll start with the fundamentals, key concepts explained simply, and then go deeper into the failures, operational risks, and infrastructure required to transform AI from a demo into a dependable production system.

Whether you’re a business leader trying to understand enterprise AI, an engineer dealing with failures in production, a product manager shipping AI experiences, or a CTO responsible for business outcomes, this is for you.

The Foundations: Understanding the Key Concepts

What Is AI?

Let’s begin at the start, because the term “AI” is often used so broadly that it risks losing meaning.

Artificial Intelligence (AI) is the field of building systems that can perform tasks that typically require human intelligence: understanding language, analyzing data, generating content, making decisions, recognizing patterns, and solving problems.

The enterprise AI systems creating the most value today are largely powered by Large Language Models (LLMs). These systems are trained on massive amounts of text and learn patterns of language, reasoning, and knowledge.

They can write code, summarize reports, answer questions, search information, generate workflows, and support employees or customers at scale.

Think of an LLM as a highly capable generalist that has read an enormous amount of information.

But there is one critical distinction:

Fluency is not the same as correctness.

And correctness in a demo is not the same as reliability in production. That distinction sits at the center of the modern enterprise AI challenge.

What Are AI Agents?

An AI agent is an AI system that doesn’t just generate answers; it takes actions. A chatbot gives responses. An agent can reason, decide, use tools, retrieve data, call APIs, write files, send messages, or trigger workflows.

Agents can:

• Search internal knowledge bases

• Query databases and APIs

• Write and execute code

• Send emails or notifications

• Update records or documents

• Use business tools like CRM or ticketing systems

• Make decisions based on outcomes

Most agents operate in a loop:

Goal → Reason → Act → Observe → Adjust

This makes them powerful. It also makes reliability far more important, because once AI moves from text generation to real actions, the cost of mistakes increases dramatically.
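
To make the loop concrete, here is a minimal Python sketch of it. Nothing here is a real framework's API: `run_agent`, `toy_decide`, and the `tools` mapping are hypothetical names I made up for illustration, and `decide` stands in for the model call. The part worth noticing is the step budget, which stops a confused agent from acting forever.

```python
from typing import Callable, Optional

# Hypothetical types for illustration: a tool takes a string and returns a string.
Tool = Callable[[str], str]
# A decision is either ("act", tool_name, tool_input) or ("finish", answer, "").
Decision = tuple[str, str, str]


def run_agent(
    goal: str,
    decide: Callable[[str, list[str]], Decision],
    tools: dict[str, Tool],
    max_steps: int = 5,
) -> Optional[str]:
    """Goal -> Reason -> Act -> Observe -> Adjust, with a hard step budget."""
    observations: list[str] = []
    for _ in range(max_steps):
        kind, name, payload = decide(goal, observations)  # Reason
        if kind == "finish":
            return name  # the second field carries the final answer
        if name not in tools:
            # Record the failure as an observation so the next step can adjust.
            observations.append(f"error: unknown tool {name!r}")
            continue
        observations.append(tools[name](payload))  # Act, then Observe
    return None  # budget exhausted: hand off to a human instead of looping


# Toy stand-in for the model: look something up once, then answer.
def toy_decide(goal: str, observations: list[str]) -> Decision:
    if not observations:
        return ("act", "lookup", goal)
    return ("finish", f"Answer based on: {observations[-1]}", "")


result = run_agent(
    "order 1042 status",
    toy_decide,
    {"lookup": lambda query: f"{query} = shipped"},
)
```

Returning `None` when the budget runs out is a deliberate choice in this sketch: the caller has to handle the "agent gave up" case explicitly rather than receive a half-finished answer.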

What Is Multi-Agent Orchestration?

When one agent is useful, multiple specialized agents working together can be transformative. But coordinating many agents is far harder than managing one, because every agent you add multiplies the handoffs that can go wrong. That discipline is called multi-agent orchestration.

Think of it like an orchestra:

Each musician has expertise. But without a conductor, timing breaks, signals conflict, and noise replaces performance.

In enterprise AI systems, you may have:

• A planner agent that breaks work into tasks

• A researcher agent that gathers information

• A coding agent that writes automation

• A validator agent that checks quality

• A communicator agent that prepares final output

• A supervisor that routes work and resolves conflicts

When orchestration is weak, enterprises experience:

• Contradictory outputs

• Missed handoffs

• Infinite loops

• Tool misuse

• Rising latency

• Hidden failure chains

This is why orchestration is becoming one of the most important infrastructure layers in enterprise AI.
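
Here is a small sketch of what a supervisor can look like, assuming the simplest possible design: each agent returns the name of the next agent plus its output. The names `Handoff`, `supervise`, and the three toy agents are hypothetical, not taken from any orchestration framework. The sketch guards against two of the failures listed above, missed handoffs and infinite loops.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Handoff:
    """What one agent passes to the next (hypothetical structure)."""
    task: str
    payload: str = ""
    trail: list[str] = field(default_factory=list)  # agents that handled it, in order


# An agent receives the handoff and returns (next_agent_name_or_None, new_payload).
Agent = Callable[[Handoff], tuple[Optional[str], str]]


def supervise(task: str, agents: dict[str, Agent], start: str, max_hops: int = 10) -> Handoff:
    """Route work between agents until one returns None as the next agent."""
    handoff = Handoff(task=task)
    current: Optional[str] = start
    while current is not None:
        if current not in agents:
            raise LookupError(f"missed handoff: no agent named {current!r}")
        if len(handoff.trail) >= max_hops:
            raise RuntimeError(f"hop limit reached, possible loop: {handoff.trail}")
        handoff.trail.append(current)
        current, handoff.payload = agents[current](handoff)
    return handoff


# Toy agents standing in for model-backed ones.
agents: dict[str, Agent] = {
    "planner": lambda h: ("researcher", f"plan({h.task})"),
    "researcher": lambda h: ("validator", f"facts[{h.payload}]"),
    "validator": lambda h: (None, f"checked:{h.payload}"),
}

done = supervise("summarize Q3 churn", agents, start="planner")
```

Keeping the `trail` on the handoff is what makes a failure chain visible afterwards: when something goes wrong, you can see exactly which agents touched the work and in what order.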

What Is AI Evaluation?

AI evaluation is the process of systematically measuring whether AI is actually doing what you need it to do. Unlike traditional software, AI outputs are probabilistic and context-dependent. The same prompt may produce different results. “Correctness” is often nuanced.

Good AI evaluation answers:

• Is the output accurate?

• Is it relevant to user intent?

• Is reasoning sound?

• Is it safe and policy-compliant?

• Are tools used correctly?

• Did the workflow complete the task?

• Is quality improving or degrading over time?

At LLUMO AI, we believe evaluation must be continuous: before launch, during production, and after every model or workflow change. Without AI evaluation, teams are operating on assumptions.
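
As a rough illustration of what "systematic" means, here is a tiny evaluation harness in Python. It is a sketch under heavy assumptions: `EvalCase`, `evaluate`, and `toy_system` are hypothetical names, and plain substring matching is a crude stand-in for real accuracy and policy checks. The point is the shape: a fixed set of cases, run the same way every time, producing a number you can track.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    prompt: str
    must_contain: list[str]      # facts the answer has to mention
    must_not_contain: list[str]  # e.g. phrases that would violate policy


def evaluate(system: Callable[[str], str], cases: list[EvalCase]) -> dict:
    """Run every case through the system and report the pass rate and failures."""
    failures = []
    for case in cases:
        answer = system(case.prompt).lower()
        missing = [s for s in case.must_contain if s.lower() not in answer]
        forbidden = [s for s in case.must_not_contain if s.lower() in answer]
        if missing or forbidden:
            failures.append(
                {"prompt": case.prompt, "missing": missing, "forbidden": forbidden}
            )
    passed = len(cases) - len(failures)
    pass_rate = passed / len(cases) if cases else 0.0
    return {"pass_rate": pass_rate, "failures": failures}


# Toy system standing in for the real AI application.
def toy_system(prompt: str) -> str:
    if "refund" in prompt:
        return "Refunds are available within 30 days of purchase."
    return "I guarantee this will work."


cases = [
    EvalCase("What is the refund window?", ["30 days"], ["guarantee"]),
    EvalCase("Will the integration work?", [], ["guarantee"]),
]
report = evaluate(toy_system, cases)
```

Running the same `cases` before launch and again after every prompt or model change is what turns this from a one-off test into a continuous signal.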

What Are Guardrails in AI?

Guardrails are controls that prevent AI systems from doing what they should not do. Think of them as safety systems for probabilistic software.

Guardrails can:

• Block hallucinated outputs

• Prevent unsafe responses

• Stop unauthorized tool access

• Restrict actions beyond scope

• Escalate uncertain decisions to humans

• Enforce compliance and policy rules

Modern AI guardrails often include both deterministic rules and AI-based evaluators.

Without guardrails, an autonomous AI system may be intelligent, but unsafe to trust.
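
Here is a minimal sketch of how a deterministic rule and an AI-based evaluator can sit side by side. Everything named here is an assumption for illustration: `apply_guardrails`, `CARD_PATTERN`, and `toy_scorer` are hypothetical, and the scorer is a stub standing in for a real evaluator model. The rule runs first because it is cheap and predictable; the scorer handles what rules cannot express.

```python
import re
from typing import Callable

# Deterministic rule: block anything that looks like a 16-digit card number,
# with optional spaces or hyphens between digits.
CARD_PATTERN = re.compile(r"\b(?:\d[ -]?){15}\d\b")


def apply_guardrails(
    text: str,
    risk_scorer: Callable[[str], float],
    threshold: float = 0.7,
) -> tuple[str, str]:
    """Return (decision, reason); decision is 'allow', 'block', or 'escalate'."""
    if CARD_PATTERN.search(text):
        return ("block", "possible card number in output")
    score = risk_scorer(text)
    if score >= threshold:
        # Uncertain or risky output goes to a human rather than to the user.
        return ("escalate", f"risk score {score:.2f} at or above {threshold}")
    return ("allow", "passed rule and scorer checks")


# Stub standing in for an AI-based evaluator that returns a risk score in [0, 1].
def toy_scorer(text: str) -> float:
    return 0.9 if "definitely" in text.lower() else 0.1


blocked = apply_guardrails("Your card 4111 1111 1111 1111 is on file.", toy_scorer)
escalated = apply_guardrails("This will definitely fix it.", toy_scorer)
allowed = apply_guardrails("Your ticket is open.", toy_scorer)
```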

What Is AI Reliability?

AI reliability means an AI system performs consistently, safely, and predictably in real production environments.

A reliable AI system:

• Produces quality outputs repeatedly

• Handles edge cases gracefully

• Is observable and debuggable

• Maintains standards under scale

• Improves through feedback loops

• Operates within business constraints

Reliability is what separates AI experiments from enterprise systems.

And increasingly, it is becoming the true competitive moat in AI.

Why Multi-Agent Systems Fail in the Real World

Many teams successfully build agent demos. Far fewer successfully run them in production.

Why?

Because real environments introduce:

• Ambiguous inputs

• Incomplete data

• Latency constraints

• Tool outages

• Human exceptions

• Policy boundaries

• High concurrency

• Continuous change

Common failure patterns include:

1. Cascading Hallucinations

One agent creates a false assumption. Downstream agents treat it as fact. The final output looks polished but is wrong.

2. Tool Misuse

The agent calls the right tool incorrectly: wrong SQL query, wrong parameter, wrong customer account, wrong action.

3. Infinite Loops

Agents retry endlessly or wait on each other.

4. Context Loss

Long workflows exceed the model’s context window. Earlier instructions are forgotten.

5. Silent Failures

No crash occurs. Quality simply drops.

These are exactly the categories enterprise teams must detect before users do.
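
Tool misuse is one of the easier patterns to catch early, because part of it can be checked before the tool ever runs. Below is a minimal sketch, assuming a hand-written schema per tool. `TOOL_SCHEMAS`, `validate_tool_call`, and the two tool names are hypothetical. Note the limit of this approach: it catches malformed calls, not a well-formed call aimed at the wrong customer.

```python
from typing import Any

# Hypothetical tool schemas: required argument names mapped to expected types.
TOOL_SCHEMAS: dict[str, dict[str, type]] = {
    "issue_refund": {"customer_id": str, "amount": float},
    "lookup_order": {"order_id": str},
}


def validate_tool_call(tool: str, args: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the call is well-formed."""
    if tool not in TOOL_SCHEMAS:
        return [f"unknown tool: {tool}"]
    schema = TOOL_SCHEMAS[tool]
    problems = []
    for name, expected in schema.items():
        if name not in args:
            problems.append(f"missing argument: {name}")
        elif not isinstance(args[name], expected):
            actual = type(args[name]).__name__
            problems.append(f"{name} should be {expected.__name__}, got {actual}")
    for name in args:
        if name not in schema:
            problems.append(f"unexpected argument: {name}")
    return problems


# The agent passed the amount as text instead of a number.
problems = validate_tool_call("issue_refund", {"customer_id": "C-17", "amount": "50"})
```

Feeding the `problems` list back to the agent as an observation gives it a chance to correct the call, which is usually better than failing the whole workflow.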

Why Evaluation Is the Foundation of AI Reliability

Traditional software testing checks deterministic outputs.

AI systems require behavioral measurement.

That means evaluating:

• Accuracy

• Groundedness

• Task completion

• Consistency

• Safety

• Tool correctness

• Latency vs quality tradeoffs

• Drift over time

At LLUMO AI, we built this philosophy into our platform through Eval360, a specialized SLM-as-a-Judge (a small language model used as an evaluator) trained on 2M+ real-world agent evaluations.

Why does this matter?

Because generic LLM judges are expensive and inconsistent.

Purpose-built evaluators deliver faster signals, lower cost, and better reliability insights.

That means teams can detect issues before customers do.
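
Of the metrics listed above, drift over time is the one teams most often leave unmeasured, so here is a minimal sketch of a drift check. It assumes each production response already has a quality score between 0 and 1 from some evaluator; where that score comes from is outside this example. `DriftMonitor` and its parameters are hypothetical names, and this is a generic rolling-average check, not a description of how any particular product works.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Flags when the rolling mean of quality scores falls below a baseline."""

    def __init__(self, baseline: float, window: int = 50, tolerance: float = 0.05):
        self.baseline = baseline    # quality measured before launch
        self.tolerance = tolerance  # how far below baseline is acceptable
        self.window = window
        self.scores = deque(maxlen=window)  # keeps only the most recent scores

    def record(self, score: float) -> bool:
        """Add one score; return True if quality has drifted below tolerance."""
        self.scores.append(score)
        if len(self.scores) < self.window:
            return False  # not enough data yet to judge
        return mean(self.scores) < self.baseline - self.tolerance


monitor = DriftMonitor(baseline=0.90, window=3)
flags = [monitor.record(score) for score in [0.92, 0.91, 0.90, 0.80, 0.75]]
```

With a window of three, the monitor stays quiet through the first dip and raises the flag only once the recent average falls below 0.85. A larger window trades detection speed for fewer false alarms.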

Why Guardrails Are No Longer Optional

Many teams still think guardrails reduce AI capability.

That misses the point.

Capability without control creates risk.

The right question is not:

Can the model do this?

The real question is:

Can the model do this safely, repeatedly, and under governance?

Effective guardrails include:

Input Guardrails

Detect malicious prompts, prompt injection, unsafe requests.

Output Guardrails

Catch hallucinations, toxic content, policy violations.

Action Guardrails

Require approval before refunds, transactions, data changes, or sensitive actions.

Workflow Guardrails

Detect abnormal sequences, resource spikes, or suspicious agent behavior.

This is how enterprises move from experimentation to trust.
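
Action guardrails are the easiest of the four to picture in code. The sketch below assumes a simple policy: some actions always need a human, and refunds need one above a limit. `ALWAYS_REVIEW`, `AUTO_REFUND_LIMIT`, `needs_approval`, and `execute_with_guardrail` are all hypothetical names and values chosen for illustration.

```python
from typing import Callable

# Hypothetical policy: actions that always need a human, and a refund limit.
ALWAYS_REVIEW = {"delete_account", "change_billing_details"}
AUTO_REFUND_LIMIT = 100.0


def needs_approval(action: str, params: dict) -> bool:
    """Decide from policy alone whether a human must sign off."""
    if action in ALWAYS_REVIEW:
        return True
    if action == "refund" and params.get("amount", 0.0) > AUTO_REFUND_LIMIT:
        return True
    return False


def execute_with_guardrail(
    action: str,
    params: dict,
    run: Callable[[str, dict], str],
    approve: Callable[[str, dict], bool],
) -> str:
    """Run the action only if policy allows it or a human approves it."""
    if needs_approval(action, params) and not approve(action, params):
        return f"blocked: {action} was not approved"
    return run(action, params)


# Toy executor and reviewers standing in for real systems.
def run_action(action: str, params: dict) -> str:
    return f"done: {action}"


small = execute_with_guardrail("refund", {"amount": 25.0}, run_action, lambda a, p: False)
large = execute_with_guardrail("refund", {"amount": 500.0}, run_action, lambda a, p: False)
approved = execute_with_guardrail("delete_account", {}, run_action, lambda a, p: True)
```

The policy check lives outside the model on purpose. The agent can ask for a large refund, but it cannot talk its way past `needs_approval`.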

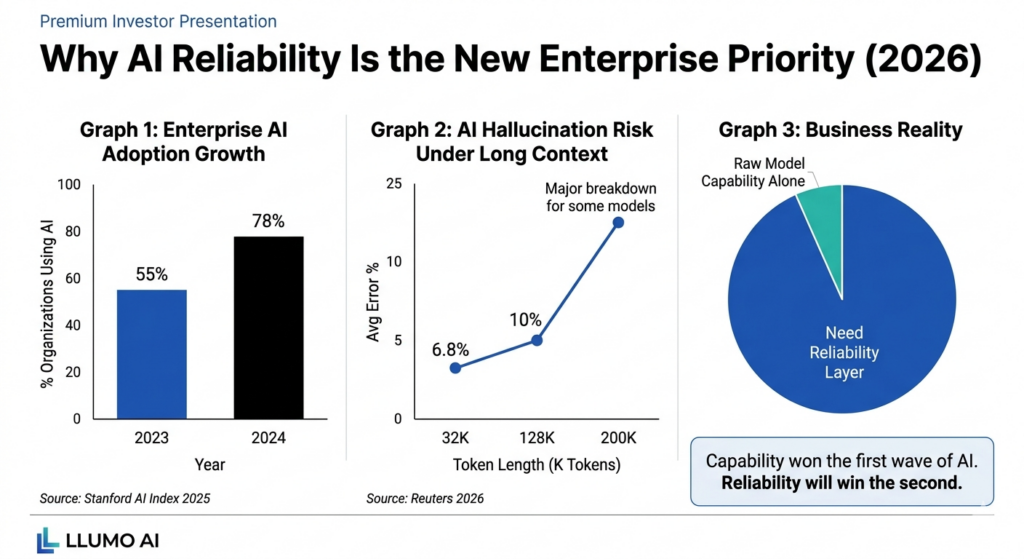

Why AI Reliability Is the New Competitive Frontier

Eighteen months ago, leaders asked:

Can AI do this task?

Now they ask:

Can AI do this task reliably enough to run my business?

That shift changes everything.

The winners in enterprise AI will not simply have the smartest model.

They will have:

• The most reliable workflows

• The fastest debugging cycles

• The strongest governance

• The safest deployments

• The clearest performance visibility

Reliability is now a growth accelerator, not just a risk function.

How LLUMO AI Solves This

We built LLUMO AI as the reliability layer for modern AI systems.

Real-Time AI Evaluation

Continuously measure output quality, safety, completion, groundedness, and drift.

Multi-Agent Workflow Monitoring

Track agent-to-agent interactions, tool calls, handoffs, bottlenecks, and breakdowns.

Failure Detection & Debugging

Know what broke, why it broke, and how to fix it quickly.

Intelligent Guardrails

Prevent hallucinations, misuse, unsafe outputs, and broken workflows before impact.

Reliability Scoring

Objective performance signals leadership teams can trust.

Continuous Improvement Loops

Use production signals to optimize prompts, workflows, and agent behavior continuously.

Most observability tools tell you something failed after the damage is done. We help you understand failure before production impact.

The Roadmap for Enterprise Leaders

Phase 1: Instrument Everything

If you cannot see it, you cannot improve it.

Phase 2: Define Reliability Metrics

Accuracy alone is not enough.

Phase 3: Evaluate Before Optimizing

Measure first. Tune second.

Phase 4: Add Risk-Based Guardrails

Protect what matters most first.

Phase 5: Build Feedback Loops

Every failure should create learning.

Phase 6: Establish Governance

Reliable AI is as much organizational discipline as technical architecture.
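
Phase 1 can start small. Here is a minimal sketch of instrumenting individual workflow steps with a Python decorator. `traced`, `TRACE`, and the two example steps are hypothetical, and the in-memory list stands in for whatever tracing backend you actually use.

```python
import functools
import time

# In-memory trace for illustration; a real system would ship these records
# to a tracing or logging backend instead.
TRACE: list[dict] = []


def traced(step_name: str):
    """Record the name, status, and duration of every call to the wrapped step."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            status = "ok"
            try:
                return func(*args, **kwargs)
            except Exception:
                status = "error"
                raise  # the failure still propagates; we only record it
            finally:
                TRACE.append({
                    "step": step_name,
                    "status": status,
                    "seconds": time.perf_counter() - start,
                })
        return wrapper
    return decorator


@traced("retrieve_docs")
def retrieve_docs(query: str) -> list[str]:
    return [f"doc about {query}"]


@traced("call_tool")
def flaky_tool() -> str:
    raise TimeoutError("tool did not respond")


docs = retrieve_docs("pricing")
try:
    flaky_tool()
except TimeoutError:
    pass  # the error is already recorded in TRACE
```

Once every step leaves a record like this, Phase 2 has something to work with: you can count error rates per step and watch durations before deciding which reliability metrics matter most.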

Frequently Asked Questions

Q. What is AI reliability?

AI reliability is the ability of AI systems to consistently perform accurately, safely, and predictably in production environments.

Q. Why do AI agents fail in production?

AI agents fail due to hallucinations, tool misuse, context loss, orchestration errors, latency issues, and lack of guardrails.

Q. What are AI guardrails?

AI guardrails are controls that prevent unsafe outputs, hallucinations, unauthorized actions, and policy violations.

Q. What is AI evaluation?

AI evaluation is the process of measuring AI output quality, correctness, safety, consistency, and workflow performance.

Q. What is multi-agent orchestration?

Multi-agent orchestration is the coordination of multiple AI agents working together across tasks, tools, and workflows.

Q. How can enterprises reduce AI hallucinations?

Use grounded retrieval systems, response evaluation, confidence scoring, output filters, and human review for high-risk tasks.

Q. Why is AI observability not enough?

Observability shows what happened. Reliability explains impact, risk, quality, and how to improve systems.

Q. How does LLUMO AI help?

LLUMO AI helps teams evaluate outputs, monitor workflows, detect failures, enforce guardrails, and improve production AI systems.

Conclusion: The Next Chapter of Enterprise AI Belongs to the Reliable

We are entering a new era. The question is no longer whether AI is impressive. It is whether AI is dependable.

The organizations that solve reliability first will deploy faster, scale further, and earn more trust than competitors still stuck in demo mode. At LLUMO AI, we believe AI reliability is the most important infrastructure challenge of this decade. Because trusted AI is what unlocks real business transformation. Not demos. Not hype. Just AI Reliability.